Wider is better for astrophotography — understand the basics of bit depth and dynamic range for better astrophotos.

Before you can have a proper understanding of what it means to stretch your image, you need to be sure you understand what your image is made of: numbers. That’s it . . . lots and lots of numbers. Every pixel has a number that corresponds to how bright the pixel should appear. Small numbers are dark, and large numbers are bright. Monochrome (black and white) images have just one number per pixel; color images have three numbers, one each for the red, green, and blue contribution. Simple, right?

Bits of Data

The next thing to consider is how those numbers are represented by a computer. The answer to that is bits. The more bits, the larger the number you can represent. A single bit can be 0 or 1. Black or white. Not much variation in there. We could call this extremely low dynamic range.

A computer represents a number as a whole string of bits, like this:

00101010

This is actually the number 42. The more bits or places you have, the more unique combinations of 1’s and 0’s are possible, and thus the more numbers can be represented. Essentially, every permutation of 1’s and 0’s is a unique numeric value. How this works is fascinating, but beyond the scope of an astrophotography blog. Note, I’m going to neglect negative numbers here, as well as something called “endianness” . . . I am not a sadist. This blog is not for people who are already engineers!

Two bits can store the numbers from 0 to 3, three bits can store the numbers from 0 to 7, and so on, with additional bits supporting exponentially larger numbers. It’s not terribly important that you understand this; just remember, more bits equals larger numbers . . . and lots more variation in tone values that can be recorded or stored.

Richard S. Wright, Jr.

The smallest memory location on a computer is 8 bits in a row, called a byte, which represents a number between 0 and 255. The number 5 may require only three bits, but it has to go in a memory location that is at least 8 bits wide. All the leading bits are just zero.

00000101 (5 in binary)
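If you'd like to check these bit patterns yourself, here is a minimal sketch in Python (chosen only for illustration; nothing here depends on a particular language). It uses built-in functions to convert between strings of bits and ordinary numbers:

```python
# Parse a string of bits as a base-2 number.
answer = int("00101010", 2)
print(answer)  # 42

# Write 5 in binary, padded with leading zeros to fill one 8-bit byte.
five = format(5, "08b")
print(five)  # 00000101

# On its own, the number 5 needs only three bits.
needed = (5).bit_length()
print(needed)  # 3
```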

Some important numbers that come up when it comes to image data and cameras are:

8 bits: 0 to 255

12 bits: 0 to 4,095

14 bits: 0 to 16,383

16 bits: 0 to 65,535
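These limits all follow one rule: with n bits, the largest value is 2 to the power n, minus 1. A short Python sketch (the function name `max_value` is just an illustrative choice) reproduces the list:

```python
def max_value(bits):
    """Largest unsigned number that fits in the given number of bits."""
    return 2 ** bits - 1

# Bit depths mentioned in the text, from a single bit up to 16 bits.
ranges = {bits: max_value(bits) for bits in (1, 2, 3, 8, 12, 14, 16)}

for bits, top in ranges.items():
    print(f"{bits:2d} bits: 0 to {top:,}")
```

Each extra bit doubles the number of distinct values, which is why the range grows so quickly.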

The more bits you have, the subtler the variations in tonal values and intensities you can record, and the larger the values you can store. We call the range of values an image can hold its dynamic range. More dynamic range is always better when it comes time to start processing your images.

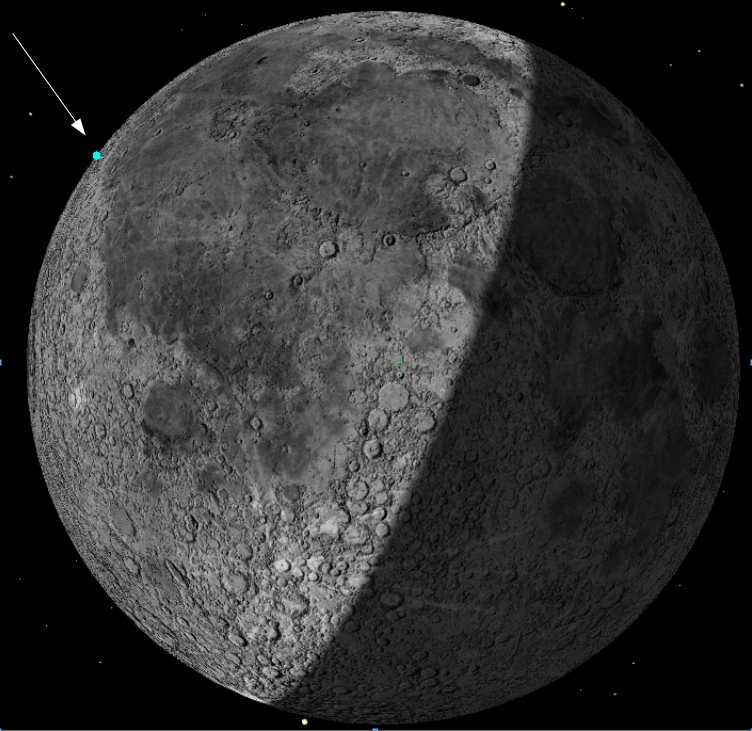


Data Bytes

Computers work in multiples of bytes (8 bits), so if you can’t fit a number in an 8-bit memory location, you have to use two 8-bit memory locations next to each other (or more as the numbers get even larger!). The number 256, for example, would actually consume a minimum of 2 bytes of storage, like so:

00000001 00000000

There’s an important idea here: A number that requires 9 bits is actually going to require 16 bits of storage.
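To see the rounding-up at work, here is a small Python sketch. The helper names `bytes_needed` and `as_bytes` are made up for this example, and the bytes are printed most-significant first simply to match the way the number is written out above:

```python
def bytes_needed(value):
    """Whole bytes required to store a non-negative integer."""
    bits = max(value.bit_length(), 1)  # zero still occupies one bit
    return (bits + 7) // 8             # round up to a multiple of 8 bits

def as_bytes(value):
    """Show the stored bytes as groups of 8 bits, most significant first."""
    raw = value.to_bytes(bytes_needed(value), "big")
    return " ".join(format(b, "08b") for b in raw)

print(bytes_needed(255), as_bytes(255))  # 1 11111111
print(bytes_needed(256), as_bytes(256))  # 2 00000001 00000000
```

The value 256 needs only 9 bits, yet it occupies two full bytes, or 16 bits of storage.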

Most normal images on your computer are 8-bit images, holding intensity numbers between 0 and 255 for each color channel. (Usually these are called 24-bit images, but each channel really only has 8 bits.) This limit occurs because most computer displays also support only 8 bits per channel, and that way the image maps directly to your screen. Monochrome images have the same value in each color channel… so they really are JUST 8-bit images on your screen. Most raw images from a DSLR or astrophotography camera contain 16-bit pixels holding values that can technically range from 0 to 65,535, but they have to be scaled down to the 0 to 255 range before they can be displayed.

So far so good?

Decoding Bit Depth

We talked last month about how camera sensors count photons. Each pixel in a camera's detector can hold a certain number of electrons before it overflows or tops out. Regardless of how many electrons a pixel can hold, they're counted using 12, 14, or 16 bits when they're read off the chip. The more bits per pixel, the more expensive it is to make the chip. This is an important factor when trying to keep costs down and also an important factor in the dynamic range in tonality that the camera can capture.

When that data is read off of most cameras, the numbers go into either one-byte containers (0 to 255, remember), or two-byte containers (16 bits, or 0 to 65,535). A camera that reads out 8-bit image values may well have a 12-bit or more sensor, but it has to scale that number down to fit into an 8-bit memory location on the computer. A 16-bit image would have each pixel value divided by 256! Many planetary cameras will return 8-bit image data to speed things up (with half the bits, there's half the data to move), while deep-sky imagers always prefer 16-bit data from their camera.
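As a rough illustration of that trade-off, the Python sketch below scales a few made-up 16-bit pixel values down to 8 bits and compares how many bytes each version occupies. The helper `to_8bit` and the sample values are assumptions for the example, not how any particular camera driver works:

```python
import struct

def to_8bit(value, source_bits):
    """Scale a pixel down to 8 bits by dropping its low-order bits."""
    # Shifting right by (source_bits - 8) divides by 2 ** (source_bits - 8):
    # that is 256 for 16-bit data and 16 for 12-bit data.
    return value >> (source_bits - 8)

pixels16 = [0, 1000, 32768, 65535]            # made-up 16-bit samples
pixels8 = [to_8bit(p, 16) for p in pixels16]

raw16 = struct.pack(f"<{len(pixels16)}H", *pixels16)  # two bytes per pixel
raw8 = struct.pack(f"<{len(pixels8)}B", *pixels8)     # one byte per pixel

print(pixels8)                # [0, 3, 128, 255]
print(len(raw16), len(raw8))  # 8 4
```

Note how 1,000 collapses to just 3: the fine tonal steps in the faint end of the image are exactly what gets thrown away.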

Here’s an important takeaway: Camera sensors may support just about any bit depth, but camera data on your computer is almost always 8 or 16 bits. Even if a camera produces 14-bit data (most DSLRs do this, for example), the data would be stored as 16-bit pixels in the file.

In a raw DSLR file with 14-bit data, the leading two bits are zero; the 14 bits that represent the pixel value follow. If a pixel were completely saturated (with a value of 16,383), it would be represented like this:

0011111111111111

Now for the fun part: To display this image on a computer, we have to scale this giant number down to fit into only 8 bits, and to do this properly, we need to know the data's actual bit depth. For a 14-bit camera, we’d divide the pixel values by 64. If we didn’t know that the data was really 14-bit values stored in 16-bit pixels, we might try to scale the full range of 65,535 down to 255, and we’d divide the number by 256! This would compress and darken our image, as the pixel values would range from 0 to 63 instead of the full range from 0 to 255. The result would be a very dark image (assuming it was well-exposed to begin with).
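The arithmetic is easy to verify. This Python sketch takes the saturated 14-bit pixel shown earlier and scales it both ways; the constant names are illustrative only:

```python
SENSOR_BITS = 14     # the data's actual bit depth
CONTAINER_BITS = 16  # the size of the pixel as stored in the file

saturated = 0b0011111111111111  # the saturated 14-bit pixel, 16,383

# Correct: scale from the true 14-bit range, dividing by 64.
correct = saturated // 2 ** (SENSOR_BITS - 8)

# Mistaken: assume the full 16-bit range is in use, dividing by 256.
mistaken = saturated // 2 ** (CONTAINER_BITS - 8)

print(saturated)  # 16383
print(correct)    # 255
print(mistaken)   # 63
```

With the wrong divisor, the brightest possible pixel lands at 63, about a quarter of the way up the display range.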

For even more fun, many cameras will read out, say, 12- or 14-bit values from the sensor, but then scale them up to make them range from 0 to 65,535. On some CCD cameras, the camera gain may serve this additional purpose.
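How a given camera performs this scaling isn't specified here, so the following Python sketch simply assumes proportional scaling with rounding. The function `scale_up` is hypothetical and does not represent any camera's actual firmware:

```python
def scale_up(value, sensor_bits, container_bits=16):
    """Stretch a sensor value so the sensor's range fills the container's."""
    sensor_max = 2 ** sensor_bits - 1
    container_max = 2 ** container_bits - 1
    # Integer arithmetic; adding half the divisor rounds to the nearest value.
    return (value * container_max + sensor_max // 2) // sensor_max

print(scale_up(0, 12))      # 0
print(scale_up(4095, 12))   # 65535
print(scale_up(16383, 14))  # 65535
```

A simpler alternative would be to shift the bits left (multiplying by 16 for 12-bit data), which tops out at 65,520 rather than exactly 65,535. Either way, the stretched data has no more distinct tonal values than the sensor originally recorded.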

The automatic scaling works well and requires little user intervention when an image is exposed with plenty of light. Alas, in astrophotography, most of our pixel values are small to begin with, and we have to brighten the data somehow. Brightening images can be done linearly or non-linearly, and we’ll get to that next month.

About Richard S. Wright Jr.

Contributing Editor Richard S. Wright Jr. is a software developer by profession specializing in computer graphics technologies at LunarG Inc. Richard is also a consulting engineer and imaging specialist for Software Bisque. A lifelong amateur astronomer, Richard first experimented with a webcam and black-and-white film images of the Moon in the 1990s, and he subsequently became hopelessly addicted to astrophotography. Currently, he seeks treatment at his dark sky camp/observatory in Okeechobee, Florida, whenever he can. Check out his online photo gallery.


Comments

John-Murrell

March 15, 2019 at 4:41 pm

As well as what happens in displaying the images you need to consider how many bits the image format you use to save the files has. JPEG files are 8 bits as are several other formats. There are some versions of other files such as TIFF that will store 16 bits as well as DSLR RAW files. Remember that storing an image with more than 8 bits as an 8 bit image requires that some of the bits are thrown away and once this has been done they cannot be recovered.


madrobin

October 13, 2019 at 3:32 pm

Hi Richard,

I just purchased your latest book "Evening Show". It's got some really fantastic astrophotos. I was expecting to see some pages on data processing and was a bit disappointed not to see that section. Hopefully you will come up with another book on that aspect soon, as your blogs show you are a very good writer and have a huge ability to break down difficult concepts for ordinary mortals.

Thanks.


Richard S. Wright Jr. (Post Author)

October 14, 2019 at 10:56 am

Thanks for your support! Yes, that book is a picture book that explains astrophotography to the layman, or the family members of imagers. I have plans to write a book more targeted for astrophotographers themselves, but that is going to still be a good while in the making.
