Nearly 11 billion years ago, the universe churned out stars at a rate 10 times greater than today. And yet all the starlight in the cosmos appears no brighter than a 60-watt light bulb seen from miles away.

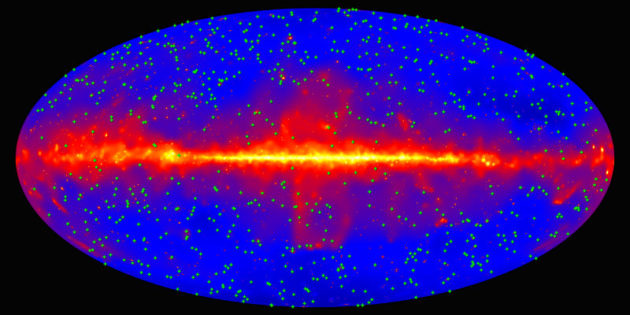

NASA / DOE / Fermi-LAT Collaboration

Four thousand octillion octillion octillion, or 4 × 10⁸⁴. That’s roughly the number of ultraviolet, visible, and near-infrared photons emitted by all the stars in all the galaxies throughout time, according to a new estimate.
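As a quick arithmetic check, and assuming the short-scale (American) octillion of 10²⁷, the spelled-out figure does come to 4 × 10⁸⁴:

```python
# One octillion on the short scale used in American English (an assumption
# about which naming convention the article uses).
OCTILLION = 10**27

# "Four thousand octillion octillion octillion" = 4,000 x (10^27)^3
photons = 4_000 * OCTILLION**3

# Format in scientific notation with no decimal places.
print(f"{photons:.0e}")
```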

By tallying all these photons of starlight, astronomers have pieced together an updated timeline of how the rate of star formation has waxed and waned over the 13.8 billion years since the Big Bang, the team reported November 30th in Science. This new timeline jibes with earlier independent estimates: the cosmic star-forming factories were at their most prolific about 3 billion years after the Big Bang and have been gradually slowing down ever since.

The vast majority of the starlight that escapes galaxies ends up in a haze of photons known as the extragalactic background light. This light is tricky to measure directly, so an international team of researchers, the Fermi-LAT Collaboration, instead looked at how it dims gamma rays from blazars, wellsprings of radiation powered by supermassive black holes at the cores of galaxies. When a high-energy gamma ray collides with a photon of background light, the two can be converted into an electron and a positron, so the background light absorbs part of each blazar's gamma-ray output.

NASA / Sonoma State University / Aurore Simonnet

To make their tally, the researchers analyzed 9 years of Fermi Gamma-ray Space Telescope observations of 739 blazars (and one gamma-ray burst). The light from these sources took anywhere from 200 million to 11.6 billion years to reach Earth. By measuring how strongly specific wavelengths of each blazar's gamma-ray light had been suppressed, the team estimated how the intensity of the extragalactic background light has varied over time, which in turn revealed the total number of these photons swarming about the universe.
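The suppression comes from pair production: a gamma ray of energy E and a background photon of energy ε can turn into an electron and a positron only if their combined energy is high enough. For a head-on collision the condition is E·ε ≥ (mₑc²)². The sketch below is a simplified illustration, not the collaboration's analysis. It assumes head-on collisions and ignores the redshifting of both photons on their way to Earth, and the function names are hypothetical. It shows which background photons can absorb a gamma ray of a given energy:

```python
# Electron rest energy in eV (CODATA 2018 value).
ELECTRON_REST_ENERGY_EV = 0.51099895e6
# Planck constant times speed of light, in eV*nm, to convert energy to wavelength.
HC_EV_NM = 1239.84198


def threshold_energy_ev(gamma_energy_ev: float) -> float:
    """Lowest background-photon energy that can pair-produce with a gamma ray
    of the given energy, assuming a head-on collision: E * eps >= (m_e c^2)^2."""
    return ELECTRON_REST_ENERGY_EV**2 / gamma_energy_ev


def threshold_wavelength_nm(gamma_energy_ev: float) -> float:
    """Longest background-photon wavelength that can still absorb the gamma ray."""
    return HC_EV_NM / threshold_energy_ev(gamma_energy_ev)


for e_gev in (10, 100, 1000):
    e_ev = e_gev * 1e9  # convert GeV to eV
    eps = threshold_energy_ev(e_ev)
    lam = threshold_wavelength_nm(e_ev)
    print(f"{e_gev:>5} GeV gamma ray: eps >= {eps:.3f} eV, lambda <= {lam:.0f} nm")
```

Under these assumptions, higher-energy gamma rays can be absorbed by longer-wavelength background photons. That is why measuring the suppression at different gamma-ray energies probes the background light at different wavelengths.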

While 4 × 10⁸⁴ photons sounds like a lot, it’s about one ten-thousandth of the number of photons from the cosmic microwave background. And given the vastness of the universe, the glow from all this starlight is actually quite feeble. It’s “equivalent to a 60-watt light bulb observed from a distance of 2.5 miles,” says co-author Marco Ajello (Clemson University).
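To get a feel for how faint that is, the inverse-square law gives the flux from a point source as P / (4πd²). This sketch assumes the bulb radiates all 60 watts equally in every direction and ignores how much of a real bulb's output is visible light. It illustrates the geometric dimming only and does not reproduce how the team made its comparison. The helper `point_source_flux` is a hypothetical name:

```python
import math

METERS_PER_MILE = 1609.344  # international mile


def point_source_flux(power_w: float, distance_m: float) -> float:
    """Flux in W/m^2 from an isotropic point source: P / (4 * pi * d^2)."""
    return power_w / (4 * math.pi * distance_m**2)


distance_m = 2.5 * METERS_PER_MILE  # 2.5 miles in meters
flux = point_source_flux(60.0, distance_m)
print(f"{flux:.2e} W/m^2")
```

Under these assumptions the result is a few tenths of a microwatt per square meter.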

At any given time, this background light reflects the glow of all the stars that existed up to that point. This allowed the researchers to reconstruct the rate of star formation across most of cosmic time. Other astronomers have previously pieced together this history by directly measuring ultraviolet light from short-lived massive stars, though this approach likely misses the swarms of relatively faint galaxies that populated the universe during its first billion years or so.

This new measurement of the star formation rate matches those earlier estimates. The rate peaked nearly 11 billion years ago, when every cubic megaparsec of space — a volume just over 3 million light-years on a side — converted about 0.1 solar masses of gas into stars every year. That’s about ten times greater than the current rate.

To put that into perspective, our galaxy produces about seven stars every year, says Ajello. But back then, the Milky Way would have churned out about 70 stars each year. “Our galaxy and the entire universe were lit up like a Christmas tree,” he says.
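The unit conversion and the Milky Way comparison above can be checked with simple arithmetic. This sketch takes the peak rate (0.1 solar masses per year per cubic megaparsec), the factor of ten, and the seven-stars-per-year figure from the article. It treats "about ten times" as exactly 10, which is a simplifying assumption, and it assumes, as Ajello's comparison does, that the Milky Way's rate scales by the same factor as the cosmic average:

```python
LY_PER_PARSEC = 3.26156  # light-years in one parsec

# Side length of one cubic megaparsec, in light-years.
mpc_in_ly = 1e6 * LY_PER_PARSEC
print(f"1 Mpc = {mpc_in_ly / 1e6:.2f} million light-years")

PEAK_SFR_DENSITY = 0.1   # solar masses per year per Mpc^3 (from the article)
PEAK_TO_TODAY = 10       # "about ten times greater" (assumed exact here)
today_sfr_density = PEAK_SFR_DENSITY / PEAK_TO_TODAY
print(f"Implied rate today: ~{today_sfr_density:.2f} solar masses per year per Mpc^3")

milky_way_today = 7      # stars per year (from the article)
milky_way_peak = milky_way_today * PEAK_TO_TODAY
print(f"Milky Way at the peak: ~{milky_way_peak} stars per year")
```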

Reference:

The Fermi-LAT Collaboration. “A Gamma-Ray Determination of the Universe’s Star Formation History.” Science. November 30, 2018.
