The Event Horizon Telescope creates the image of a black hole shadow thanks to the precise coordination of a worldwide telescope network. Here’s how they do it.

Event Horizon Telescope Collaboration / Astrophysical Journal Letters, 875(2019) L1 / CC BY 3.0

On April 10, 2019, scientists delighted the world by unveiling an image of the black hole at the center of the distant galaxy M87. The shadow is wreathed by light from hot gas that whirls just outside the black hole, circling the invisible beauty.

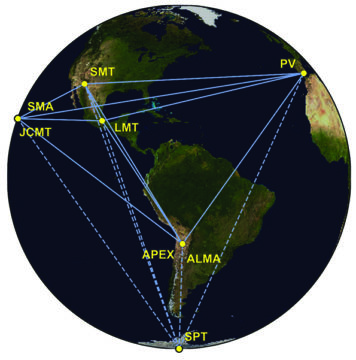

To create this image, an international team combined observations from radio telescopes spread across the globe, from Hawai‘i to Spain and Arizona to Chile. The dishes observed simultaneously, effectively acting as a single virtual, planet-size dish with a resolving power equivalent to seeing a hydrogen atom at arm’s length.

But how do you build a virtual telescope?

The answer is a technique called very long baseline interferometry (VLBI). VLBI is actually not new; radio astronomers have been using it for decades. But no one had previously achieved VLBI at a frequency that could so stunningly invade a galaxy’s innermost sanctum.

The basic principle of interferometry is this: Take two telescopes, separated by some distance, and observe an object simultaneously with both. Light comes from the object as a wavefront, like ripples in a pond created by splashing ducks. The two telescopes will catch a slightly different part of each wavefront, because the wave reaches one dish a fraction of a second later than the other. Account for that delay, then carefully add the data together, and you can measure the object’s structure with the resolution you’d have from a telescope as wide as the distance between the two dishes.
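
As a rough check on that resolution claim, the short Python sketch below applies the rule of thumb that the finest angle a telescope can resolve is about the wavelength divided by its width. The 1.3 mm wavelength is the EHT's observing wavelength; the Earth-diameter baseline and the 30-meter single dish are illustrative assumptions, not actual EHT baseline lengths.

```python
import math

# Conversion factor from radians to microarcseconds.
RAD_TO_MICROARCSEC = math.degrees(1.0) * 3600.0 * 1e6


def angular_resolution_uas(wavelength_m, baseline_m):
    """Rule-of-thumb resolution, theta ~ wavelength / baseline, in microarcseconds."""
    return wavelength_m / baseline_m * RAD_TO_MICROARCSEC


WAVELENGTH_M = 1.3e-3        # EHT observing wavelength (1.3 mm, 230 GHz)
EARTH_DIAMETER_M = 1.2742e7  # assumption: longest possible ground baseline
SINGLE_DISH_M = 30.0         # assumption: one large millimeter-wave dish

virtual_dish_uas = angular_resolution_uas(WAVELENGTH_M, EARTH_DIAMETER_M)
single_dish_uas = angular_resolution_uas(WAVELENGTH_M, SINGLE_DISH_M)

print(f"Planet-size virtual dish: {virtual_dish_uas:.0f} microarcseconds")
print(f"Single 30 m dish:         {single_dish_uas / 1e6:.1f} arcseconds")
```

The planet-size case comes out at roughly 20 microarcseconds, or about 1e-10 radian. That is the same order of magnitude as the angle covered by a hydrogen atom (about 1e-10 meter wide) held roughly a meter away.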

But when observing something with structure on a variety of scales, things get complicated: It’s like having a flock of ducks cavorting in the pond, their waves interacting and changing the pattern in complex ways. In order to reconstruct the image, you need a detailed understanding of how the radio waves are reinforcing or canceling one another as they travel to the dishes. The solution is a set of telescopes with different separations, which enables you to mix and match the pairs and detect structures of various sizes and orientations. That’s why the Event Horizon Telescope isn’t just two scopes at opposite ends of the world: They need a variety of separations, or baselines, in order to fill in the image.

The animations below by graduate student Daniel Palumbo (Harvard) show how the Event Horizon Telescope’s VLBI network works. On the left is Earth as seen by M87’s black hole. As the world turns, different telescopes come into view. Each forms a pair with the others, their baselines drawn in red.

Now look at the right panel. This graph plots the distance between the telescopes divided by the wavelength observed. The x-axis is east-west; the y-axis is north-south. Points farther from zero correspond to a larger separation and, thus, a finer resolution. The zero point at center is the largest scale, what you’d see with only one telescope.

Each pair of dishes plots two dots on this graph, because you can “read” their separation two ways: for example, Chile to Spain or Spain to Chile. As Earth turns, the positions of the two sites relative to each other, as seen by the black hole, change: The telescope in Mexico, for example, crosses more distance from left to right than the ones in Chile do. This rotation changes both the east-west and north-south lengths of the baselines and their orientation, which is why the points trace arcs across the plot with time.
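
For readers who want to see where those arcs come from, here is a minimal Python sketch of the standard textbook projection of one baseline onto the plane of the sky. The baseline vector is hypothetical (it does not correspond to any real pair of EHT sites), the function name `uv_track` is made up for this example, and the declination of M87 is approximate. The baseline is assumed to be given in Earth-fixed equatorial coordinates: X toward the meridian on the celestial equator, Y toward the east, Z toward the north celestial pole.

```python
import numpy as np


def uv_track(baseline_xyz_m, wavelength_m, declination_rad, hour_angles_rad):
    """Project a baseline onto the sky plane, in units of wavelengths.

    Returns (u, v): u is the east-west component, v the north-south one.
    """
    bx, by, bz = baseline_xyz_m
    h = np.asarray(hour_angles_rad, dtype=float)
    sin_d, cos_d = np.sin(declination_rad), np.cos(declination_rad)
    u = (bx * np.sin(h) + by * np.cos(h)) / wavelength_m
    v = (-bx * sin_d * np.cos(h) + by * sin_d * np.sin(h) + bz * cos_d) / wavelength_m
    return u, v


WAVELENGTH_M = 1.3e-3                          # 1.3 mm observing wavelength
M87_DEC_RAD = np.deg2rad(12.4)                 # approximate declination of M87
BASELINE_M = np.array([4.0e6, -5.0e6, 3.0e6])  # hypothetical baseline vector

# Six hours of Earth rotation, sampled once per hour (15 degrees per hour).
hour_angles = np.deg2rad(np.linspace(-45.0, 45.0, 7))

u, v = uv_track(BASELINE_M, WAVELENGTH_M, M87_DEC_RAD, hour_angles)

# Reading the same pair "the other way" gives the mirrored second dot.
u_conj, v_conj = -u, -v

for ui, vi in zip(u, v):
    print(f"u = {ui / 1e9:+.2f} Gλ, v = {vi / 1e9:+.2f} Gλ")
```

Each hour angle yields one dot and its mirror image through the origin. Sampled over a night, the dots sweep out a segment of an ellipse, which is the arc seen in the plot.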

How much of this swirly graph, called the UV plane, is filled in tells astronomers the range of scales their VLBI network can detect. If the UV plane were completely filled in with red, we’d have a complete image. That, however, would take far more telescopes than we have — the EHT basically used every radio telescope on the planet that could observe at 1.3 mm (230 GHz), and there’s still a lot of white space here.

Instead, the scientists use sophisticated computer algorithms to fill in the blanks and reconstruct the image. To make that possible, the team installed atomic clocks at each telescope, devices so dependable that they’ll lose only 1 second in 10 million years. These clocks mark the observations with exact time stamps. The researchers then fly the hard drives with these time-stamped data back to their supercomputers and combine, or correlate, the many sites’ observations, aligning them to within trillionths of a second. Only then can they start reconstructing what the black hole’s shadow looks like.
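
The idea behind the correlation step can be illustrated with a toy Python example. It is only a sketch under simplified assumptions: two simulated "sites" record the same random sky signal, each buried in its own noise, with one recording shifted by a made-up delay of 37 samples. Cross-correlating the two recordings recovers that delay. Real correlators handle far weaker signals and must also model Earth's geometry and rotation, which this example ignores.

```python
import numpy as np

rng = np.random.default_rng(seed=0)

n_samples = 4096
true_delay = 37  # hypothetical delay between the sites, in samples

# The shared signal from the sky, plus independent receiver noise per site.
sky = rng.normal(size=n_samples)
site_a = sky + rng.normal(scale=2.0, size=n_samples)
site_b = np.roll(sky, true_delay) + rng.normal(scale=2.0, size=n_samples)

# Circular cross-correlation computed with FFTs: the peak marks the lag
# at which the two recordings line up best.
spectrum = np.fft.fft(site_b) * np.conj(np.fft.fft(site_a))
cross_correlation = np.fft.ifft(spectrum).real

estimated_delay = int(np.argmax(cross_correlation))
if estimated_delay > n_samples // 2:
    estimated_delay -= n_samples  # interpret large lags as negative delays

print(f"true delay: {true_delay} samples, estimated: {estimated_delay} samples")
```

Even though the noise at each site is stronger than the shared signal, the correlation peak stands out because only the sky signal is common to both recordings. This is also why the time stamps matter: without them, the search for the right lag would have no reliable starting point.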

Here are the same Earth and UV plane panels, this time joined by the image of the black hole’s shadow they reveal. As Earth turns, more data on different scales are added, and the image’s sharpness evolves.

For the full-fledged duck analogy for VLBI, watch this talk by Michael Johnson (Center for Astrophysics, Harvard & Smithsonian). If you’re curious about how the image reconstruction works, Katie Bouman (also CfA) has given a nice TED talk explaining one of the imaging algorithms.

Note in the animations above that the South Pole Telescope is out of range. The EHT team uses it to observe the Milky Way’s central black hole, Sagittarius A*, and also used it to detect a calibrator source for the M87 observations. For the VLBI network as seen by Sgr A*, check out this simulation by Laura Vertatschitsch (now Systems & Technology Research). Some of the sites shown are different from the ones involved in the April 2017 campaign, but the concept is the same.

Comments

fif52

April 16, 2019 at 6:11 pm

Went through their TED talk about image reconstruction. There were a number of points worth noting. They are:

Smallest size = wavelength/telescope size.

Difficulty imaging moon with current capabilities.

Quote, "there is an infinite number of images possible".

Puzzle pieces, 'what images look like everyday'.

Their use of everyday images as a guide for analysing the data was confusing. Simply put, there are two images, one likely and one not; these then get whittled down until you are left with the last image, which ends up being the image. This is somewhat different from other recent images, which mostly follow Interstellar's version of a black hole.

Is this the correct version or are both correct?

As for visible size, it's an open question what sort of impact the ELT will have at optical wavelengths. But like other people, I'm waiting for and anticipating improvements on current capabilities, or at least an artist's impression based on data.

Lou

April 17, 2019 at 11:10 am

Both are correct, but M87* is only 40-something microarcseconds across and the EHT has a resolution of 20-something microarcseconds, so there are too few pixels to resolve the Interstellar disk features.
